Fighting one of the biggest problems of eye trackers: calibration! Eye trackers need to be calibrated for each individual user, which makes them hard to use outside the lab. Here we propose a moving-target calibration that calibrates users implicitly, reliably, and even without them knowing.

Abstract

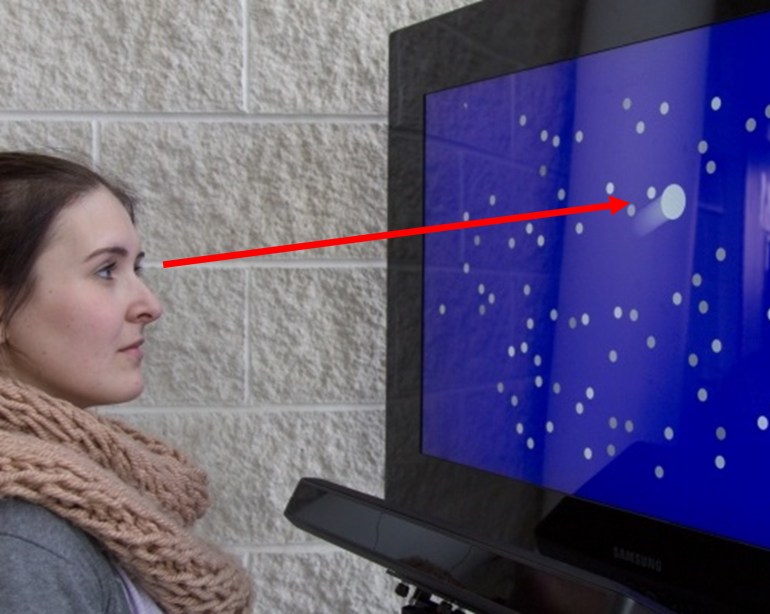

Eye gaze is a compelling interaction modality but requires user calibration before interaction can commence. State-of-the-art procedures require the user to fixate on a succession of calibration markers, a task that is often experienced as difficult and tedious. We present pursuit calibration, a novel approach that, unlike existing methods, is able to detect the user’s attention to a calibration target.

This is achieved by using moving targets, and correlation of eye movement and target trajectory, implicitly exploiting smooth pursuit eye movement. Data for calibration is then only sampled when the user is attending to the target. Because of its ability to detect user attention, pursuit calibration can be performed implicitly, which enables more flexible designs of the calibration task. We demonstrate this in application examples and user studies, and show that pursuit calibration is tolerant to interruption, can blend naturally with applications and is able to calibrate users without their awareness.
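The idea of sampling only while the eye follows the target can be illustrated with a minimal sketch. It is not the implementation from the paper: the function names, the window length of 30 samples, the correlation threshold of 0.8, and the affine mapping fitted at the end are all assumptions chosen for illustration. The sketch also assumes the target moves along both axes within each window (for example on a circular path), since a constant coordinate has no defined correlation and is treated here as "not following".

```python
import numpy as np

def pearson(a, b):
    """Pearson correlation of two 1-D arrays; returns 0.0 if either is constant."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    da = a - a.mean()
    db = b - b.mean()
    denom = np.sqrt((da ** 2).sum() * (db ** 2).sum())
    return float((da * db).sum() / denom) if denom > 0 else 0.0

def collect_pursuit_samples(gaze, target, window=30, threshold=0.8):
    """Keep (gaze, target) pairs from windows where gaze correlates with the target.

    gaze and target are (N, 2) arrays of x/y positions sampled at the same times.
    window and threshold are illustrative values, not taken from the paper.
    """
    gaze = np.asarray(gaze, dtype=float)
    target = np.asarray(target, dtype=float)
    keep = np.zeros(len(gaze), dtype=bool)
    # Walk over non-overlapping windows and test each axis separately.
    for start in range(0, len(gaze) - window + 1, window):
        sl = slice(start, start + window)
        rx = pearson(gaze[sl, 0], target[sl, 0])
        ry = pearson(gaze[sl, 1], target[sl, 1])
        if rx > threshold and ry > threshold:
            keep[sl] = True  # the user appears to be following the target
    return gaze[keep], target[keep]

def fit_affine(gaze, target):
    """Least-squares affine map from raw gaze to target coordinates.

    An affine map is a simplifying assumption; a real tracker may use a
    different model. Returns M with shape (3, 2); apply as [x, y, 1] @ M.
    """
    gaze = np.asarray(gaze, dtype=float)
    target = np.asarray(target, dtype=float)
    A = np.hstack([gaze, np.ones((len(gaze), 1))])
    M, *_ = np.linalg.lstsq(A, target, rcond=None)
    return M
```

In this sketch, windows in which the user looks elsewhere contribute no data, so an interrupted calibration simply resumes once the eye follows the target again.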

In the talk, I discuss the research in much more detail; see here.

Pursuit calibration: making gaze calibration less tedious and more flexible

Ken Pfeuffer, Melodie Vidal, Jayson Turner, Andreas Bulling, and Hans Gellersen. 2013. In Proceedings of the 26th annual ACM symposium on User interface software and technology (UIST ’13). ACM, New York, NY, USA, 261-270. doi, pdf, video, talk