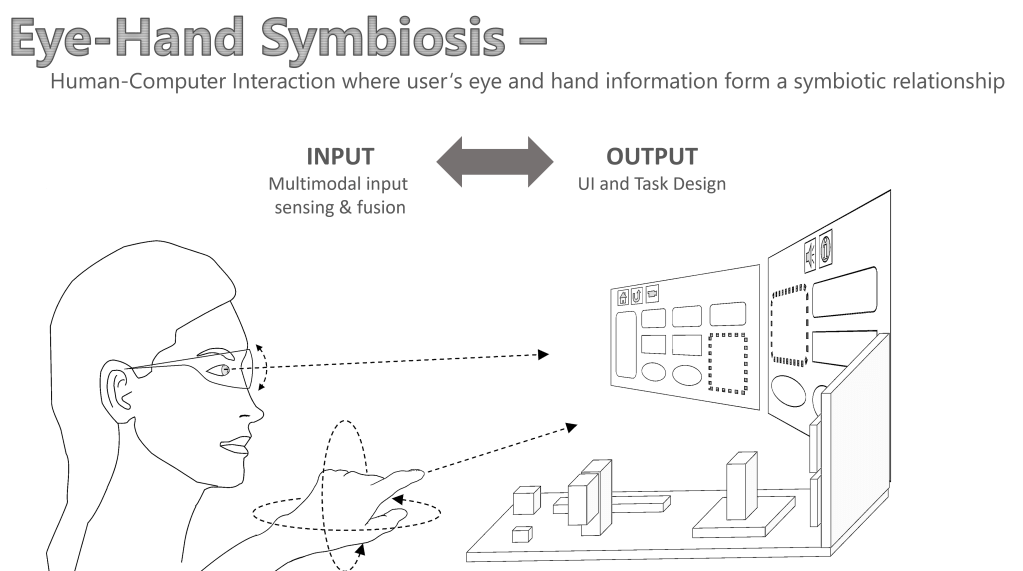

“Eye-Hand Symbiosis” is an interaction paradigm in which the eyes and hands form a symbiotic relationship in the user interface. It enables a form of human-computer interaction that extends beyond what either modality offers on its own, opening up a wide range of new interactive capabilities.

Background

The computer interface so far: The eyes see, the hands act

In the history of Human-Computer Interaction (HCI), the major interfaces have been based on the coordination of two organs: the eyes and the hands. The interaction follows a clear division of labour between the two: the hands provide commands to the computer via input devices, and the eyes perceive the visual output on a screen. This long-lasting paradigm has spanned the eras of computing, from command-line interfaces to desktop and mobile devices, which are arguably the most successful and widely available human-computer systems of our times.

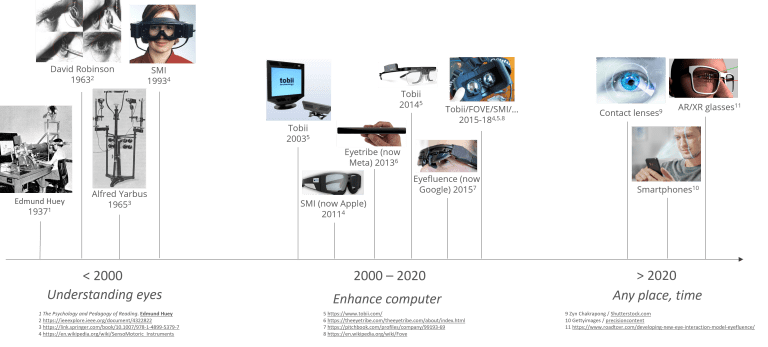

Eye-tracking technology is rapidly advancing

The particular technological advance we are witnessing is the increasingly usable, accurate, and secure sensing of what our eyes are focusing on via eye-tracking sensors. Before the millennium, eye tracking was primarily a means to understand how our eyes work in psychology and medical fields. Since then, various digital devices have been enhanced with eye-tracking sensors, and in future this may extend to eye-tracked headsets, contact lenses, glasses, smartphones, and in principle any computing device.

Why the hands and eyes?

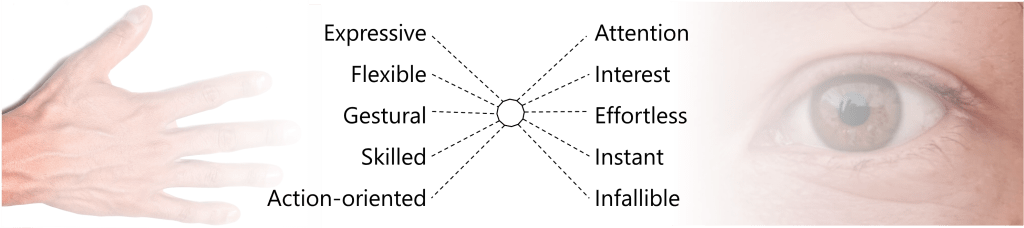

One of the most evolved relationships in our body exists between the eyes and the hands. We can intuitively coordinate what we see with what we touch, hold, and manipulate with our hands. This is not an easy feat, as each organ by itself performs highly complex movements, often for seemingly distinct purposes. Our hands are highly expressive and flexible, and can learn skills and gestures, whereas our eyes indicate intent, attention, and interest, effortlessly and instantly. Combined, they form the basis for powerful human abilities to experience and manipulate the world around us.

Characterisation of Eye+Hand UIs

In the following, I describe the core characteristics of Eye+Hand UIs, in contrast to hand-based pointing input devices.

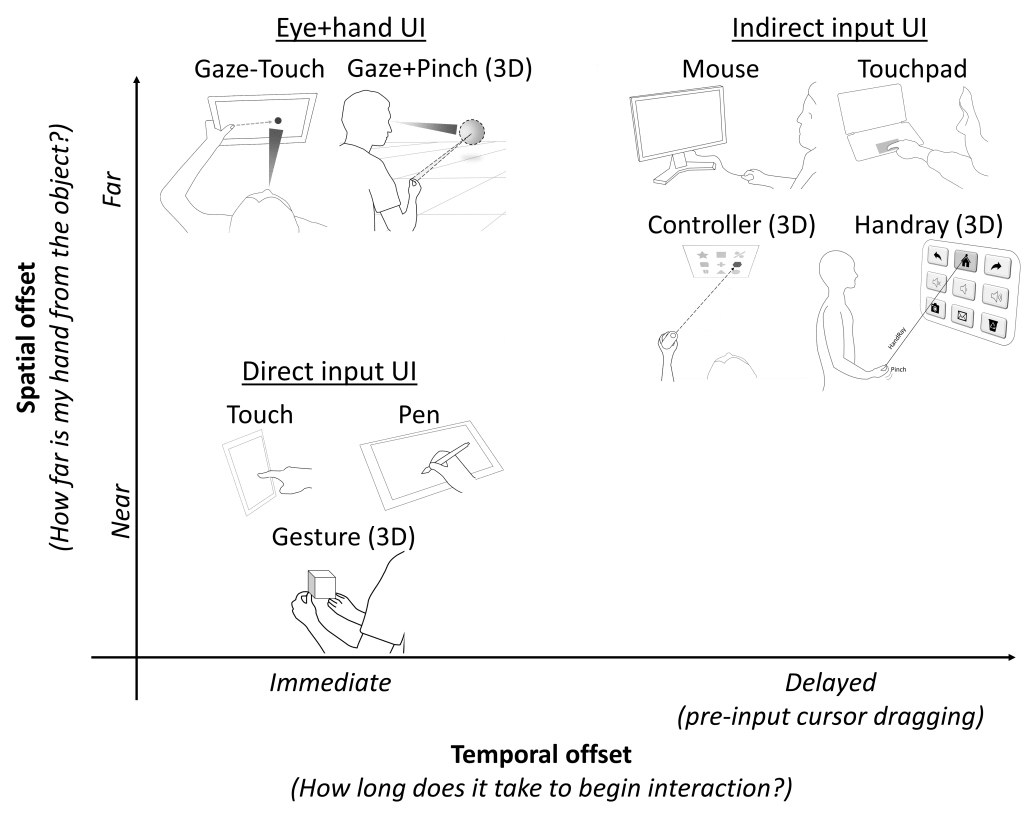

Eye+Hand UI and direct/indirect input

Hand-controlled human-computer input devices can be classified as direct or indirect. A mouse, for example, is indirect since the device is a mediator for controlling a cursor on the screen. A touchscreen is direct in the sense that the user’s input at the physical finger point immediately manipulates the underlying object (more info here / here). Furthermore, the (in)directness of input can be decomposed into the dimensions of time and space, inspired by the degree of indirection in Beaudouin-Lafon’s work on Instrumental Interaction (CHI’00):

- Spatial indirection: distance between physical input and virtual object intended for interaction.

- Temporal indirection: the difference in time between starting the physical action and manipulating the intended object.

These two can be used to characterise the two classes of input devices as well as Eye+Hand UIs:

- Direct input devices such as a touchscreen, stylus, and 3D hand gesture are spatially and temporally direct. The hand or finger’s physical input position coincides with the virtual object that is manipulated. At the moment of physical contact, users immediately select and can initiate manipulation of the object. What’s the consequence? Direct input is often associated with benefits of simplicity of use, familiarity from a real-world metaphor of physical manipulation (“grab”, “touch”, and “drag”), and fast actions.

- Indirect input devices such as a mouse, ray-pointing controller, and laser pointer are spatially and temporally indirect. The physical hand position is offset from the actual virtual object position. Before manipulation commences, there’s a sub-step of moving the cursor to the intended location. Benefits of indirect input include pixel-level precision and the possibility to interact efficiently at a distance (e.g., see Forlines et al. CHI’07).

- Eye+Hand UIs based on the interaction techniques of Gaze-Touch, Gaze+Pen, or Gaze+Pinch, such as the Apple Vision Pro UI, are spatially indirect and temporally direct. As such, they are fundamentally different from existing direct or indirect input methods. One can interact as quickly as with direct input devices, yet reach any target, including those out of the hand's reach.
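The classification above can be written down as a small lookup structure. The following is only an illustrative sketch: the `InputClass` type, its field names, and the `describe` helper are hypothetical names chosen for this example, and the three entries simply restate the list above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InputClass:
    """One class of input, placed on the two indirection dimensions.

    Hypothetical structure for illustration only.
    """
    name: str
    examples: tuple
    spatially_direct: bool   # physical input position coincides with the object
    temporally_direct: bool  # no cursor-moving sub-step before manipulation


# The three classes as characterised in the text above.
INPUT_CLASSES = (
    InputClass("Direct input", ("touchscreen", "stylus", "3D hand gesture"), True, True),
    InputClass("Indirect input", ("mouse", "ray-pointing controller", "laser pointer"), False, False),
    InputClass("Eye+Hand UI", ("Gaze-Touch", "Gaze+Pen", "Gaze+Pinch"), False, True),
)


def describe(input_class: InputClass) -> str:
    """Return a one-line summary of where a class sits on both dimensions."""
    space = "spatially direct" if input_class.spatially_direct else "spatially indirect"
    time = "temporally direct" if input_class.temporally_direct else "temporally indirect"
    return f"{input_class.name}: {space}, {time}"


for input_class in INPUT_CLASSES:
    print(describe(input_class))
```

Laid out this way, Eye+Hand UIs are the only class of the three that combines spatial indirection with temporal directness.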

UI expressiveness

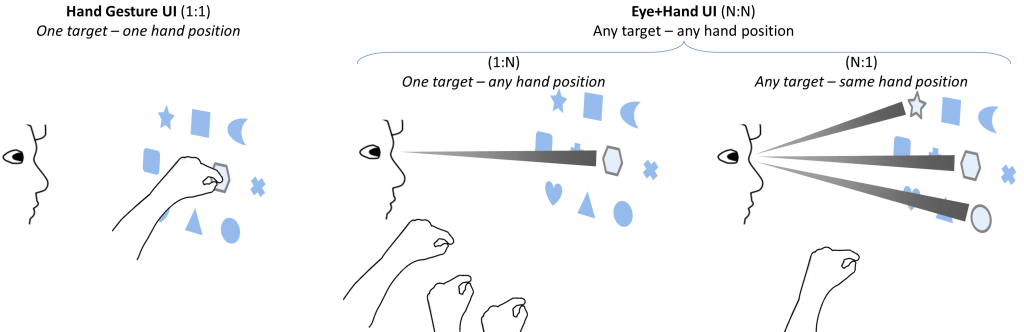

As indicated in my 2014 UIST paper, Eye-Hand Symbiosis denotes an advance for potentially all manual interfaces. A fundamental aspect of this advance concerns the direct manipulation paradigm. Direct input devices, such as a touchscreen, stylus, or 3D gesture, typically involve a 1:1 mapping between the physical input position of the device (the touch point on a touchscreen, the 3D position of a pinch gesture) and the effect the input has on the graphical object that underlies this physical position.

- Direct input: control one object from one hand position in 3D space (1:1)

- Eye+hand UI: control any object you see, from any hand position (N:N)

This can be further decomposed into two cases:

- 1:N: For one single target in space, a user can interact from any hand position they desire. This provides flexibility of input, and allows for two-handed input mappings and variable control-display gains.

- N:1: The user can interact with any target they can focus on with their eyes, from one single physical input position of the hand. This allows reaching any target from afar, using the same convenient input position. It also allows switching the context of the hand’s input at a glance.

Of course, one can have an N:N relationship when using an indirect input device such as with a mouse on a desktop PC or with a raypointing controller in VR. The interesting aspect is that the N:N relationship is now possible with direct manipulation by gestures, without the need to drag a cursor around before interaction.
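To make the N:N mapping concrete, here is a minimal sketch of a gaze-plus-pinch loop in 2D. It is not the implementation from any of the papers or from any product: the class and method names are hypothetical, and picking the target nearest to the gaze point stands in for whatever gaze hit-testing a real system would use. The target is chosen by gaze at the moment of the pinch, and only the relative hand motion, scaled by a control-display gain, moves it.

```python
from dataclasses import dataclass


@dataclass
class Target:
    name: str
    x: float
    y: float


class GazePinchSession:
    """Hypothetical sketch: gaze selects at pinch-down, hand motion manipulates."""

    def __init__(self, targets, gain=1.0):
        self.targets = targets
        self.gain = gain          # control-display gain applied to hand motion
        self.grabbed = None
        self.last_hand = None

    def pinch_down(self, gaze, hand):
        # Assumption: the target nearest to the gaze point is the one looked at.
        self.grabbed = min(
            self.targets,
            key=lambda t: (t.x - gaze[0]) ** 2 + (t.y - gaze[1]) ** 2,
        )
        self.last_hand = hand     # absolute hand position is only a reference
        return self.grabbed

    def hand_move(self, hand):
        if self.grabbed is None:
            return
        # Only the hand's displacement matters, not where the hand is.
        self.grabbed.x += self.gain * (hand[0] - self.last_hand[0])
        self.grabbed.y += self.gain * (hand[1] - self.last_hand[1])
        self.last_hand = hand

    def pinch_up(self):
        self.grabbed = None
        self.last_hand = None


photo = Target("photo", 0.0, 0.0)
map_view = Target("map", 10.0, 0.0)
session = GazePinchSession([photo, map_view], gain=2.0)

# Look at the map, pinch, and drag: the hand is nowhere near the map.
session.pinch_down(gaze=(9.5, 0.2), hand=(0.0, 0.0))
session.hand_move((1.0, 0.5))
session.pinch_up()

# Glance at the photo and pinch again from where the hand already is (N:1).
session.pinch_down(gaze=(0.1, -0.1), hand=(1.0, 0.5))
session.hand_move((0.0, 0.5))
session.pinch_up()

print(map_view, photo)
```

Because the hand position at pinch-down is used only as a reference point, the same target can be manipulated from any hand position (1:N), and the same hand position can act on whichever target the eyes select (N:1).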

Further content

The Eye-Hand Symbiosis research project covers a range of research efforts over the last 10 years, led by me.

Further content will be added in time. In the meantime, see the drop-down list in the homepage menu to dive into specific articles over the years published in scientific venues.