An exploration of 3D eye gaze interactions in VR. The focus is on what capabilities one gains when combining gaze with freehand gestures. It differs from my prior work on 2D touchscreens: less like a traditional input device, more like a supernatural ability! Lots of examples and scenarios, built with low-fidelity prototypes.

Current advances in virtual reality (VR) technology afford new explorations of experimental user interfaces in pursuit of the goal to “identify natural forms of interaction and extend them in ways not possible in the real world” (Mine, 1995). A natural form of interaction is the use of free virtual hands, enabling direct control of objects based on real-world analogies. Using the eyes for control, however, is not possible in the real world, although gaze is considered an efficient, convenient, and natural input for computer interfaces. We are interested in combining both modalities to explore how the eyes can advance freehand interactions.

a) the concept, b) real view, c) VR view from the user's perspective

The basic idea is to bring direct manipulation gestures, such as pinch-to-select or two-handed scaling, to any target that the user looks at. This is based on a particular division of labour that takes the natural roles of each modality into account: the eyes select (by visually indicating the object of interest), and the hands manipulate (by performing the physical action). This resembles a familiar pattern of interaction: looking to find and inspect an object while the hands do the hard work. What is new is that the hands need not be co-located with the manipulated object, affording fluid freehand 3D interaction in ways not possible before.

In particular:

- Compared to the virtual hand, users can interact with objects at a distance, extending the effective interaction space and letting users take full advantage of the large space the virtual environment offers.

- Compared to controller devices, users are freed from holding a device and can issue hand gestures on remote objects as if manipulating them directly. This makes the interface intuitive, as spatial gestures build on ingrained human manipulation skills.

Fundamental interaction capabilities with the technique

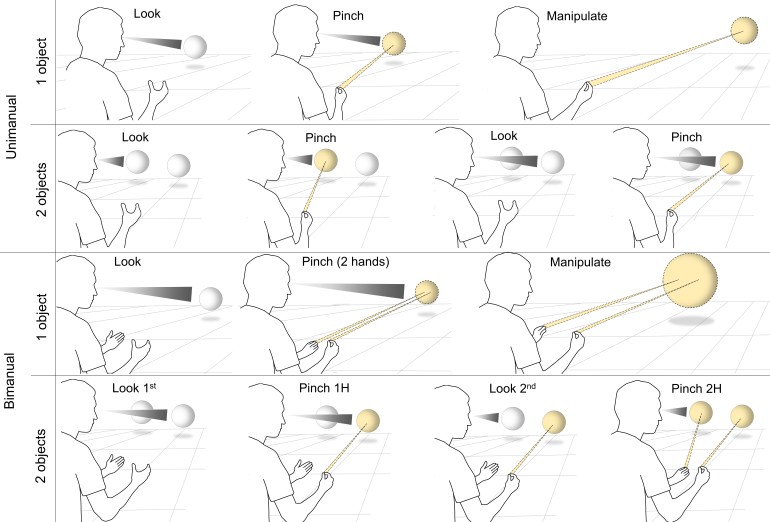

To shed light on the relation between Gaze + Pinch and direct manipulation, we first clarify high-level interaction with virtual objects. We distinguish unimanual vs. bimanual and single-object vs. two-object tasks, resulting in four constellations. Note that any of these tasks can be performed in sequence by clutching, which is useful, for instance, when a scale operation exceeds the widest stretch of a bimanual ‘pinch’ gesture. Additionally, the user can switch between unimanual and bimanual interaction without interruption, simply by pinching with or releasing one hand during the interaction.
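The bimanual scaling constellation and clutching can be illustrated in the same spirit. In this hypothetical sketch (the class name and the ratio-based mapping are assumptions, not taken from the paper), the scale of the gaze-selected object follows the ratio of the current hand separation to the separation at the moment both hands started pinching. Releasing a hand ends the cycle but keeps the accumulated scale, so repeated pinch-stretch-release cycles can exceed what a single arm span allows.

```python
import math


class BimanualScale:
    """Hypothetical two-handed scaling of a gaze-selected object, with clutching."""

    def __init__(self, scale: float = 1.0):
        self.scale = scale        # current uniform scale of the object
        self._base_scale = None   # object scale when the second hand pinched
        self._base_dist = None    # hand separation when the second hand pinched

    def both_pinched(self, left, right):
        # Start a scaling cycle: remember the baseline scale and hand distance.
        self._base_scale = self.scale
        self._base_dist = math.dist(left, right)

    def update(self, left, right):
        # Ignore updates outside a cycle or with a degenerate (near-zero) baseline.
        if self._base_dist is None or self._base_dist < 1e-6:
            return
        ratio = math.dist(left, right) / self._base_dist
        self.scale = self._base_scale * ratio

    def release(self):
        # Clutch: keep the accumulated scale, drop the baseline.
        self._base_scale = None
        self._base_dist = None


# Two clutch cycles, each stretching the hands from 0.25 m to 1.0 m apart (x4 per cycle).
s = BimanualScale()
for _ in range(2):
    s.both_pinched((-0.125, 0.0, 0.0), (0.125, 0.0, 0.0))
    s.update((-0.5, 0.0, 0.0), (0.5, 0.0, 0.0))
    s.release()
print(s.scale)  # 16.0
```

After `release()`, a system could fall back to unimanual handling (such as the translation behaviour of a single pinching hand), which is one way to realise the seamless switch between unimanual and bimanual interaction described above.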

Beyond the concepts, however, the value of this technique is best felt by experiencing it.

Abstract

Virtual reality affords experimentation with human abilities beyond what’s possible in the real world, toward novel senses of interaction. In many interactions, the eyes naturally point at objects of interest while the hands skilfully manipulate in 3D space. We explore a particular combination for virtual reality, the Gaze + Pinch interaction technique. It integrates eye gaze to select targets, and indirect freehand gestures to manipulate them. This keeps the gesture use intuitive like direct physical manipulation, but the gesture’s effect can be applied to any object the user looks at — whether located near or far. In this paper, we describe novel interaction concepts and an experimental system prototype that bring together interaction technique variants, menu interfaces, and applications into one unified virtual experience. Proof-of-concept application examples were developed and informally tested, such as 3D manipulation, scene navigation, and image zooming, illustrating a range of advanced interaction capabilities on targets at any distance, without relying on extra controller devices.

Conference Talk

Gaze + Pinch Interaction in Virtual Reality

Ken Pfeuffer, Benedikt Mayer, Diako Mardanbegi and Hans Gellersen. 2017. In Proceedings of the 2017 Symposium on Spatial User Interaction (SUI ’17). ACM, Brighton, UK, to appear.

How can I try it out? Would it be OK for you to share the code in some way?


Hi Chi,

the code exists, but it likely won't work as-is, since it was made for the Vive Gen 1 in 2017. See my Twitter for more demo examples.

But I'm thinking about getting the code ready for the Quest Pro or similar… if there's enough demand to justify the work of reviving it.

Best,

Ken


Thanks for your reply, Ken. It would be really cool to have it ready to play with on recent devices such as the Quest 2/Pro. As you have already noticed, the Apple Vision Pro uses a similar interaction strategy (eye tracking instead of head movement). I will keep an eye out for this.

Best,

Chi
