Imagine that, with AR glasses, you could simply look out of your window at nature, and augmented information would appear only when you really want it. For example, you gaze curiously at a particular mountain far away, and its name and height subtly appear. This question underlies this research, which focuses on the role our eyes can play in enabling such scenarios. We investigate how to strike a balance between experiencing reality without virtual distractions and getting virtual information when we want it.

We could look at a virtual button to select it. We could show interest in a real object, such as a far-away restaurant, to get context information such as the type of food available. We could also keep looking at a piece of information to unfold more and more detail. There are many ways.

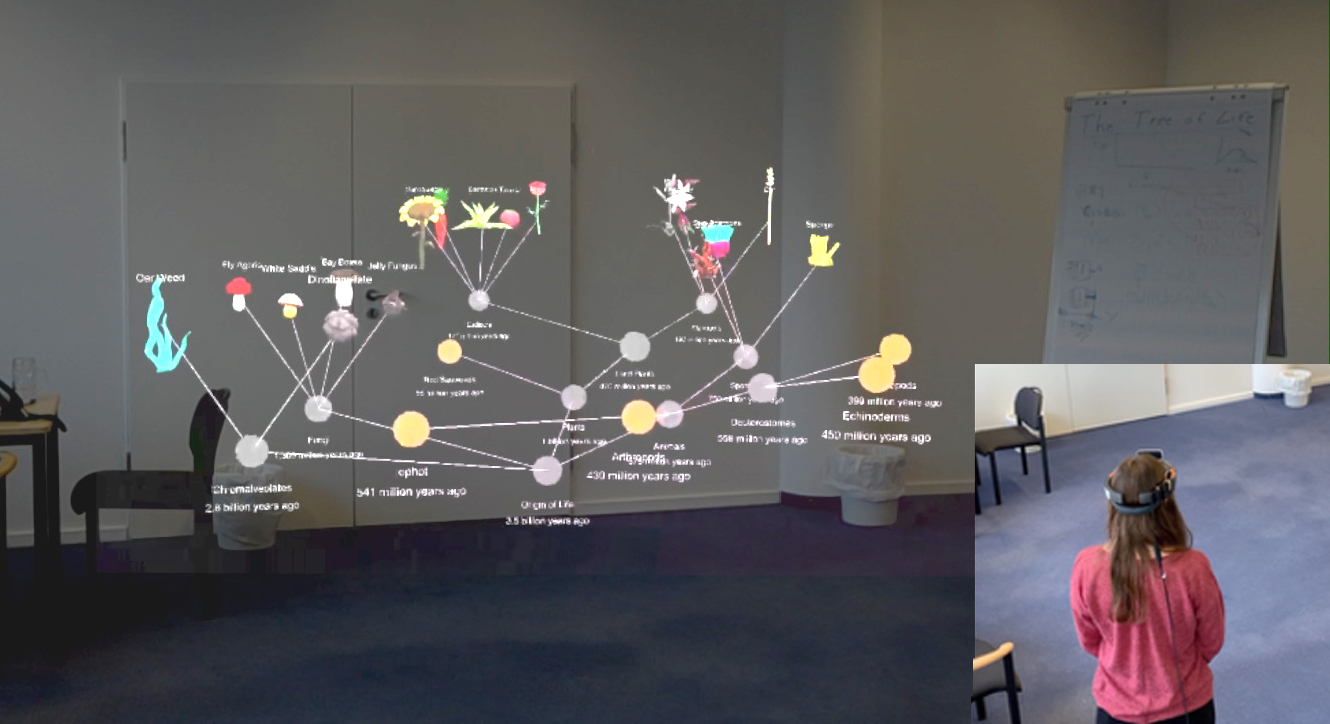

A user viewing a 3D tree model in room-size AR.

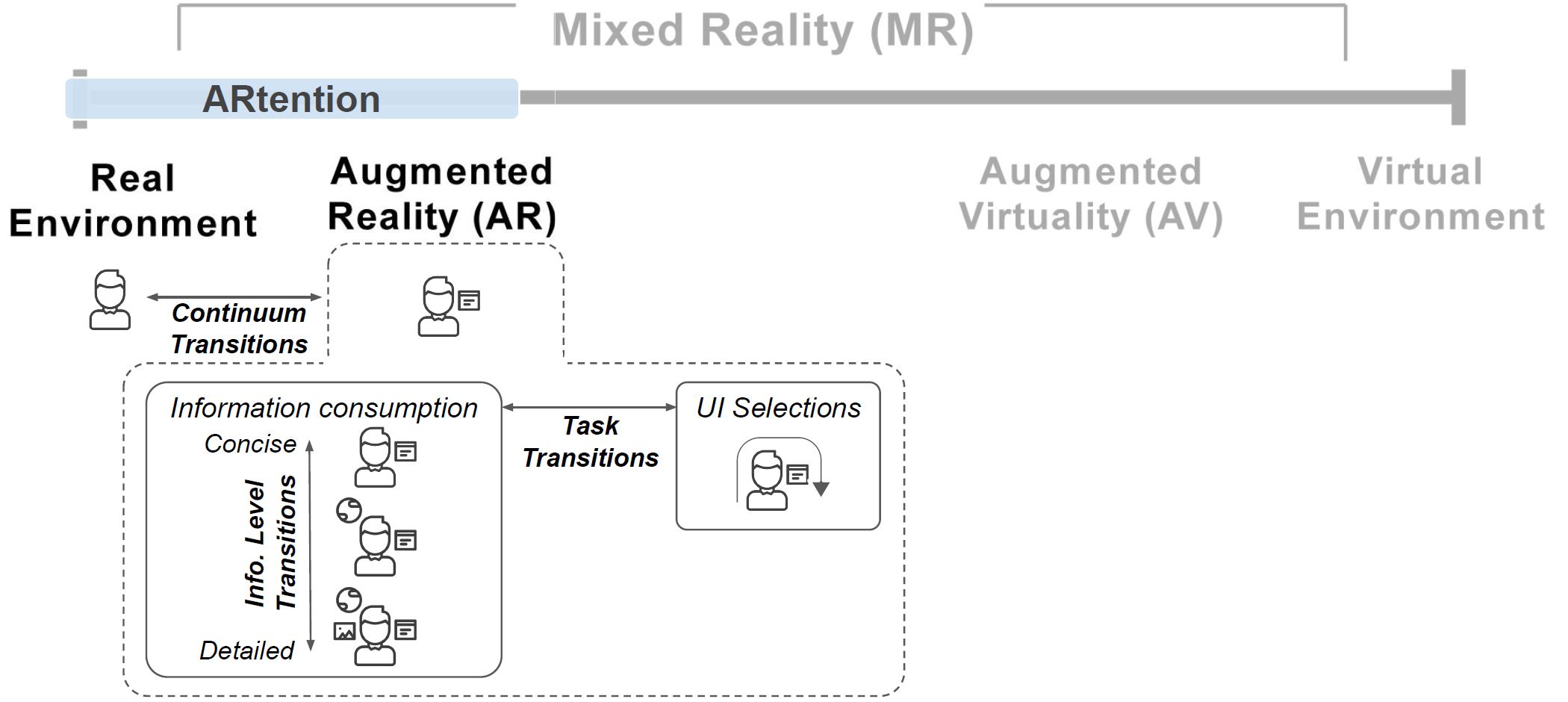

We present ARtention, a design space for gaze-input-based interfaces for AR applications. It covers three dimensions critical for designers and researchers in this area. First, reality-virtuality (RV) continuum transitions: understanding the role of gaze as a proxy for our engagement with real-world or AR content. How can we quickly access AR information, and how can we seamlessly return to the task at hand? Second, information level transitions: understanding the role of gaze as a proxy for attention on specific AR information. How can AR systems hint at available information, and continuously unfold it in response to our attention? Third, task transitions: understanding how mechanisms such as dwell time can still coexist in this space to offer both information level transitions and effective user interface (UI) selections that explicitly alter the system state.

Three transitions form the ARtention design space

So what can we use this for? The idea is that AR interaction designers can think of it as three functionalities to integrate into an AR UI. First, when users look at an object, a virtual UI can appear. Then, continued gaze can be used to provide more information. Lastly, buttons and areas of this UI can offer dwell-time-based selections. We therefore argue that much more can be done with timed eye input than its traditional role of selection. Our vision is that anything meaningful we focus our eyes on in reality could provide a hidden UI. As we curiously gaze around, an AR system using this eye-tracking approach may be able to provide us with the right information at the right time.
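The three functionalities can be sketched as two small timers driven by per-frame gaze samples. This is only an illustrative sketch, not the implementation from the paper: the class names `GazeAdaptiveLabel` and `DwellButton`, the `update` methods, and all threshold values are assumptions made for this example, and a real system would get the `looked_at` flag from an eye tracker's hit test.

```python
from dataclasses import dataclass


@dataclass
class GazeAdaptiveLabel:
    """Hypothetical label attached to a real-world object (illustrative only)."""
    levels: list[str]            # information to unfold, from coarse to detailed
    reveal_after: float = 0.5    # assumed: seconds of gaze before the UI appears
    unfold_every: float = 1.0    # assumed: extra seconds of gaze per further level
    hide_after: float = 0.5      # assumed: seconds of looking away before hiding
    gaze_time: float = 0.0
    away_time: float = 0.0

    def update(self, looked_at: bool, dt: float) -> list[str]:
        """Advance by dt seconds and return the information currently shown."""
        if looked_at:
            self.gaze_time += dt
            self.away_time = 0.0
        else:
            self.away_time += dt
            if self.away_time >= self.hide_after:
                self.gaze_time = 0.0  # return to unaugmented reality
        if self.gaze_time < self.reveal_after:
            return []                 # nothing shown yet
        # One level on reveal, then one more per unfold_every seconds of gaze.
        extra = int((self.gaze_time - self.reveal_after) // self.unfold_every)
        return self.levels[: min(1 + extra, len(self.levels))]


@dataclass
class DwellButton:
    """Hypothetical dwell-time button inside the revealed UI (illustrative only)."""
    dwell_time: float = 0.75     # assumed: seconds of gaze needed to select
    gaze_time: float = 0.0
    fired: bool = False

    def update(self, looked_at: bool, dt: float) -> bool:
        """Return True exactly once per uninterrupted dwell on the button."""
        if not looked_at:
            self.gaze_time = 0.0
            self.fired = False
            return False
        self.gaze_time += dt
        if self.gaze_time >= self.dwell_time and not self.fired:
            self.fired = True
            return True
        return False
```

In this sketch, the label covers the first two functionalities (revealing the UI and unfolding information with continued gaze), while the button covers explicit selection. Keeping them as separate timers lets a designer tune how quickly information appears independently of how long a selection takes.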

A user with augmented information

A more visual description and the demos we built in the project are shown in the video:

Full information is available in the paper:

K. Pfeuffer, Y. Abdrabou, A. Esteves, R. Rivu, Y. Abdelrahman, S. Meitner, A. Saadi, and F. Alt. ARtention: A design space for gaze-adaptive user interfaces in augmented reality. Computers & Graphics, 2021. doi: https://doi.org/10.1016/j.cag.2021.01.001