We look at a menu before we interact with it, and this project aims to exploit that behaviour via eye-tracking. In VR 3D design tools, users often hold a menu in one hand while the other hand is reserved for drawing with a pen. To change a mode in the menu, we built and evaluated an interface where users simply look at the mode to select it. This not only saves time and effort, but also lets the pen in the other hand stay where the user wants it.

Because gaze movement to a target precedes manual movement, this information can in principle be exploited to make mode-switching easier and thus reduce the cost of the secondary menu interaction. Cost can be the time needed to perform the action, the mental load involved in switching and finding the item, or the physical exertion of hand and arm movement.

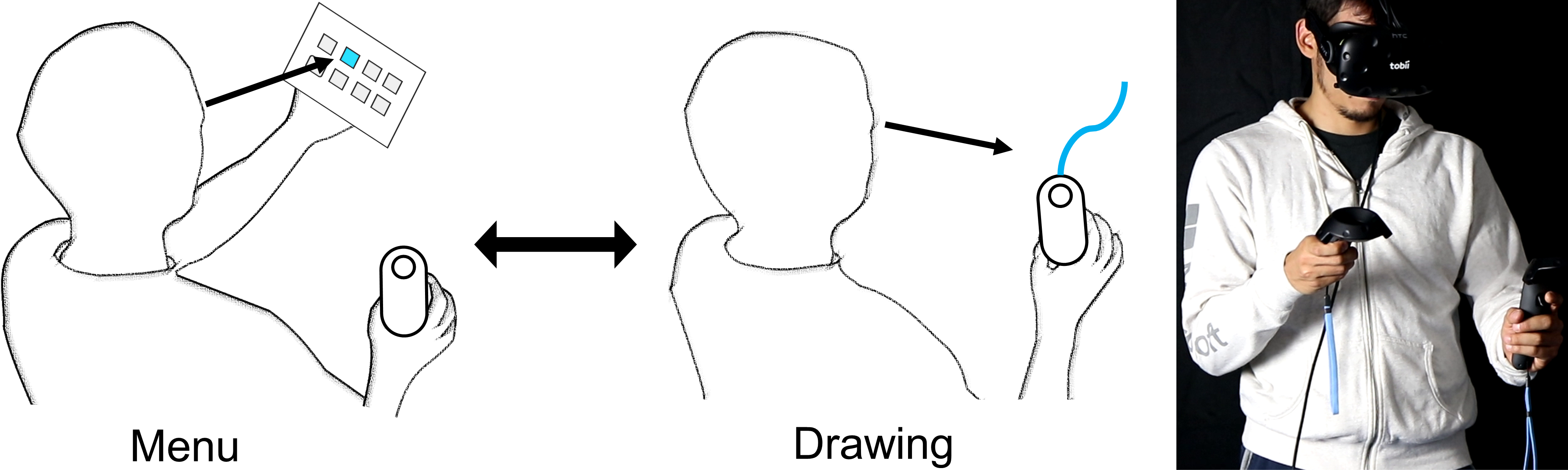

The eyes reveal when users switch between menu and drawing. We investigate this insight in VR (right).

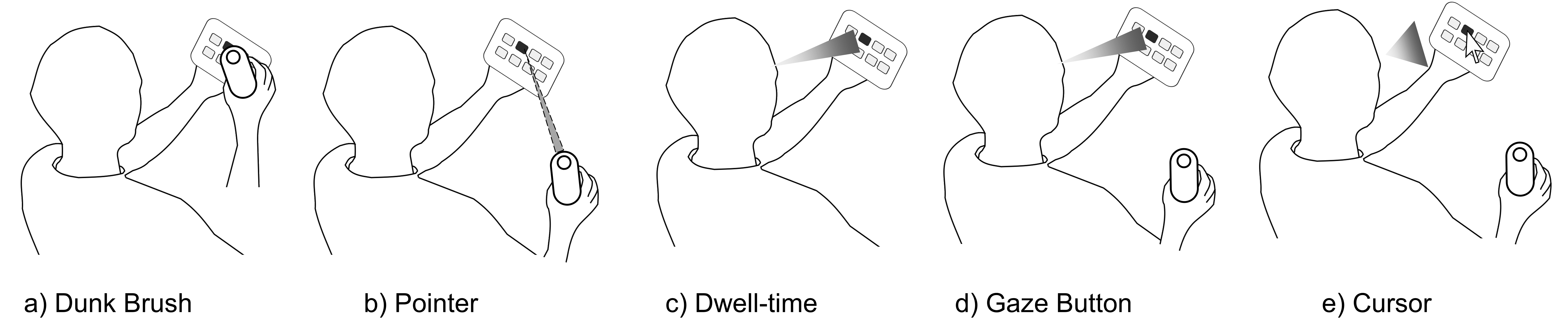

We investigate gaze interaction techniques that support users in their interaction with menus in VR. For instance, a basic eyes-only approach is dwell time, where users look at the target for a specific time. This leads to fast selections, but is subject to the Midas Touch problem, where it is ambiguous whether a gaze is intended to select or simply to look. To address this issue, researchers have proposed gaze with manual confirmation, which is plausible in the context of VR drawing with controllers. Such multimodal techniques often involve trade-offs between the modalities, such as temporal performance vs. error rate or physical effort vs. eye fatigue. It is important to understand the factors of user performance and experience that affect such techniques, in order to make informed decisions in the design of future UIs that integrate them.
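To make the two ideas above concrete, here is a minimal Python sketch of dwell-time selection and of gaze with manual confirmation. It is an illustration only, not the implementation used in the study: the names `DwellSelector` and `gaze_and_confirm`, the 0.5 s threshold, and the string item identifiers are all assumptions made for this example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DwellSelector:
    """Selects a menu item once gaze has rested on it for `dwell_s` seconds."""

    dwell_s: float = 0.5  # assumed threshold, not a value taken from the study
    _target: Optional[str] = None  # item currently under the gaze, if any
    _since: float = 0.0  # time at which the gaze arrived on `_target`
    _fired: bool = False  # True once the current dwell has already selected

    def update(self, gazed_item: Optional[str], now: float) -> Optional[str]:
        """Feed one gaze sample; return the item when a dwell completes, else None."""
        if gazed_item != self._target:
            # Gaze moved to another item (or off the menu): restart the timer.
            self._target = gazed_item
            self._since = now
            self._fired = False
            return None
        dwell_done = now - self._since >= self.dwell_s
        if gazed_item is not None and not self._fired and dwell_done:
            self._fired = True  # select only once per continuous dwell
            return gazed_item
        return None


def gaze_and_confirm(gazed_item: Optional[str], button_pressed: bool) -> Optional[str]:
    """Gaze picks the candidate; only a manual button press confirms it."""
    return gazed_item if button_pressed else None


# Hypothetical gaze samples: (item under gaze, timestamp in seconds).
selector = DwellSelector(dwell_s=0.5)
samples = [("red", 0.0), ("red", 0.3), ("red", 0.6), ("red", 0.9), (None, 1.0)]
events = [selector.update(item, t) for item, t in samples]
print(events)  # [None, None, 'red', None, None]
```

In the dwell variant, every sufficiently long look triggers a selection, which is where the Midas Touch ambiguity comes from. In the confirmation variant, looking alone never selects, at the price of an extra manual action.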

Several methods to activate an item in the menu.

The investigated techniques range from completely manual (controller pointing) to eyes-only input (dwell time). We describe the interaction design in detail in our concept section. The techniques are studied in a common task: switching colour modes as the secondary task, then drawing lines as the primary task. We provide an extensive analysis of the study results, including performance, physical movement, coordination, and user feedback.

The findings indicate that the direct approach of a dunk brush metaphor is one of the fastest in terms of performance, but trades this off against higher physical demand. Dwell time, in contrast, involves no physical effort and can be similarly fast, at the expense of eye fatigue as reported by users. The indirect methods trade several of these factors to form unique techniques; for example, the manual-only raypointing method leads to additional movements and wrist rotation, which the multimodal techniques avoid by integrating eye movements. Our study extends prior knowledge by categorising and quantifying these and other characteristics of interaction techniques in the realm of multimodal gaze and manual input, which is useful to inform future gaze-based UIs.

Want more? See the paper / video (note that the video/audio quality is not the best).

Ken Pfeuffer, Lukas Mecke, Sarah Delgado Rodriguez, Mariam Hassib, Hannah Maier, and Florian Alt. 2020. Empirical Evaluation of Gaze-enhanced Menus in Virtual Reality. In 26th ACM Symposium on Virtual Reality Software and Technology (VRST ’20). Association for Computing Machinery, New York, NY, USA, Article 20, 1–11. DOI:https://doi.org/10.1145/3385956.3418962