The thesis is finished! It’s the proof of 4 years of living the life of a lab-rat, it’s a manual on how to build a gaze-interactive landscape of user interfaces, it’s a most (un-)likely vision of a gaze-based future, and it’s an Inception-like exploration of a design space of design spaces.

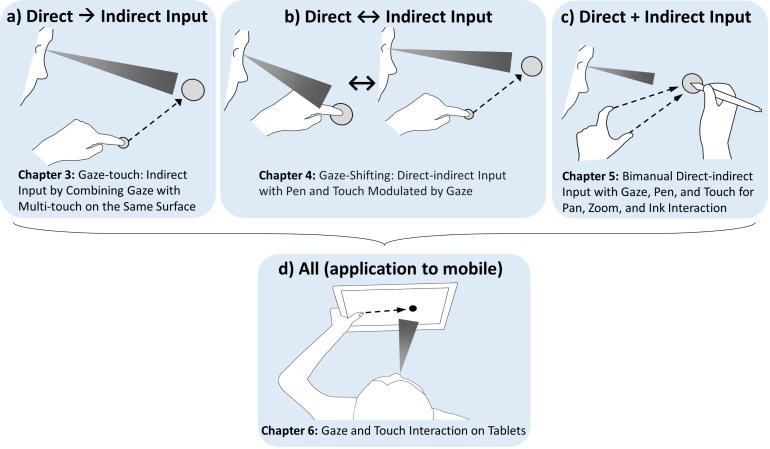

The thesis puts together four of my individual works published at UIST/CHI:

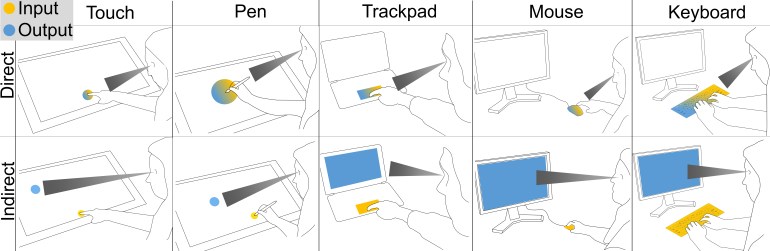

One interesting perspective here is how gaze can be investigated generally across devices by considering direct and indirect input. Every device today is either direct or indirect: touch is a direct input, for example, and a mouse is an indirect one. The novel aspect here is that, by using gaze, we can render any modality either direct or indirect. Some examples:

Abstract

Direct touch manipulation with displays has become one of the primary means by which people interact with computers. Exploration of new interaction methods that work in unity with the standard direct manipulation paradigm will be of benefit for the many users of such an input paradigm. In many instances of direct interaction, both the eyes and hands play an integral role in accomplishing the user’s interaction goals. The eyes visually select objects, and the hands physically manipulate them. In principle this process includes a two-step selection of the same object: users first look at the target, and then move their hand to it for the actual selection.

This thesis explores human-computer interactions where the principle of direct touch input is fundamentally changed through the use of eye-tracking technology. The change we investigate is a general reduction to a one-step selection process. The need to select using the hands can be eliminated by utilising eye-tracking to enable users to select an object of interest using their eyes only, by simply looking at it. Users then employ their hands for manipulation of the selected object; however, they can manipulate it from anywhere, as the selection is rendered independent of the hands. When a spatial offset exists between the hands and the object, the user’s manual input is indirect. This allows users to manipulate any object they see from any manual input position. This fundamental change can have a substantial effect on the many human-computer interactions that involve user input through direct manipulation, such as contemporary touchscreen interactions. However, it is unclear if, when, and how it can become beneficial to users of such an interaction method. To approach these questions, our research in this topic is guided by the following two propositions.

The first proposition is that gaze input can transform a direct input modality such as touch to an indirect modality, and with it provide new and powerful interaction capabilities. We develop this proposition in the context of our investigation of integrated gaze interactions within direct manipulation user interfaces. We first regard eye gaze for generic multi-touch displays, introducing Gaze-Touch as a technique based on the division of labour: gaze selects and touch manipulates. We investigate this technique with a design space analysis, prototyping of application examples, and an informal user evaluation. The proposition is further developed by an exploration of hybrid eye and hand inputs with a stylus, for precise and cursor-based indirect control; with bimanual input, to rapidly issue input from two hands to gaze-selected objects; with tablets, where Gaze-Touch enables one-handed interaction across the whole screen with the same hand that holds the device; and with free-hand gestures in virtual reality, to interact with any viewed object located at a distance in the virtual scene. Overall, we demonstrate that using eye gaze to enable indirect input yields many interaction benefits, such as whole-screen reachability, occlusion-free manipulation, high-precision cursor input, and low physical effort.
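As a rough illustration of the "gaze selects and touch manipulates" division of labour, here is a minimal Python sketch of a drag interaction. It is not the thesis implementation: the `Obj` and `GazeTouchDrag` names, the rectangle hit test, and the choice to lock the target at touch-down are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class Obj:
    """A hypothetical rectangular on-screen object."""
    name: str
    x: float
    y: float
    w: float
    h: float

    def contains(self, px, py):
        return self.x <= px <= self.x + self.w and self.y <= py <= self.y + self.h


def object_at(objects, px, py):
    """Return the topmost object under the point, or None."""
    for obj in reversed(objects):
        if obj.contains(px, py):
            return obj
    return None


class GazeTouchDrag:
    """Gaze picks the target at touch-down; touch movement then drags it."""

    def __init__(self, objects):
        self.objects = objects
        self.target = None
        self.last_touch = None

    def touch_down(self, touch, gaze):
        # Selection comes from the gaze point, not from the finger position
        # (assumption: the target is locked for the duration of the touch).
        self.target = object_at(self.objects, *gaze)
        self.last_touch = touch

    def touch_move(self, touch):
        if self.target is not None:
            # Only the relative finger motion is applied, so the hand can be
            # anywhere on the surface.
            self.target.x += touch[0] - self.last_touch[0]
            self.target.y += touch[1] - self.last_touch[1]
        self.last_touch = touch

    def touch_up(self):
        self.target = None
        self.last_touch = None
```

For example, looking at a photo near the top-left corner while touching the bottom-right corner would still drag the photo, because only the finger's movement delta is used.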

Integration of eye gaze with manual input raises new questions about how it can complement, instead of replace, the direct interactions users are familiar with. This is important to allow users the choice between direct and indirect inputs, as each affords distinct pros and cons for the usability of human-computer interfaces. These two input forms are normally considered separately from each other, but here we investigate interactions that combine both within the same interface.

In this context, the second proposition is that gaze and touch input enables new and seamless ways of combining direct and indirect forms of interaction. We develop this proposition by regarding multiple interaction tasks that a user usually performs in a sequence, or simultaneously. First, we introduce a method that enables users to switch between both input forms by implicitly exploiting visual attention during manual input. Direct input is active when users look at the position of their manual input; otherwise, they indirectly manipulate the object they look at. A design application for typical drawing and vector-graphics tasks has been prototyped to illustrate and explore this principle. The application contributes many example use cases, where direct drawing activities are complemented with indirect menu actions, precise cursor inputs, and seamless context switching at a glance.
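The switching rule described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the abstract does not say how "looking at the input" is detected, so the distance threshold `direct_radius`, the tuple-based object format, and the `resolve_target` helper are hypothetical.

```python
import math


def hit_test(objects, point):
    """Return the name of the topmost rectangle containing the point, or None.

    Each object is a hypothetical (name, x, y, width, height) tuple.
    """
    px, py = point
    for name, x, y, w, h in reversed(objects):
        if x <= px <= x + w and y <= py <= y + h:
            return name
    return None


def resolve_target(touch, gaze, objects, direct_radius=80.0):
    """Decide whether a manual input acts directly or indirectly.

    Assumption: the user counts as "looking at the input" when the gaze
    point lies within direct_radius pixels of the touch or pen position.
    """
    if math.dist(touch, gaze) <= direct_radius:
        # Eyes and hand coincide: act on whatever is under the hand.
        return "direct", hit_test(objects, touch)
    # Eyes are elsewhere: redirect the input to the object being looked at.
    return "indirect", hit_test(objects, gaze)
```

With a canvas on the left and a menu on the right, drawing while looking at the pen tip resolves to direct input on the canvas, while glancing at the menu during the same pen contact resolves to indirect input on the menu. A real system would likely need to account for eye-tracker noise when choosing the threshold.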

We further develop the proposition by investigating simultaneous direct and indirect input by bimanual input, where each input is assigned to one hand. We present an empirical study with an in-depth analysis of using indirect navigation in one hand, and direct pen drawing in the other. We extend this input constellation to tablet devices, by designing compound techniques for use in a more naturalistic setting where one hand holds the device. The interactions show that many typical tablet scenarios, such as browsing, map navigation, homescreen selections, or image galleries, can be enhanced through exploiting eye gaze.

PhD Thesis: Extending Touch with Eye Gaze

Ken Pfeuffer. 2017. Lancaster University, UK. doi, pdf