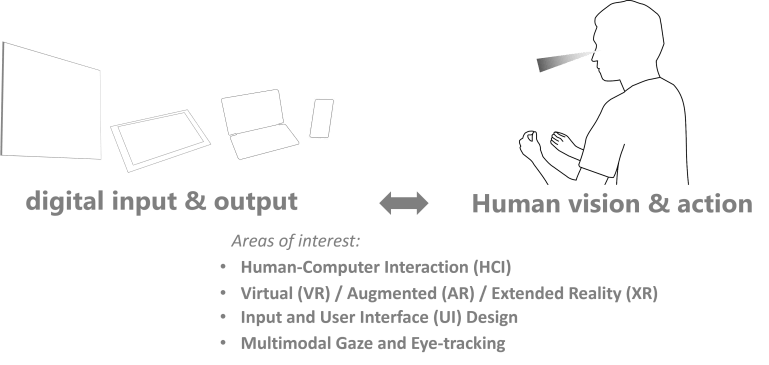

My research interests lie in human-computer interaction, UI design, adaptive systems, and eye-tracking. I am interested in exploring experimental and radically novel interface designs of the future, and in rethinking how existing devices and interfaces can be augmented by integrating new technologies and sensors. This includes the design of interaction techniques for tablet computers, multimodal and AI-driven systems, and virtual and augmented reality, with the aim of exploring new interactive capabilities that empower users.

Topics include:

UI Software and Technology. Exploring novel adaptive user interfaces to increase the user’s efficiency, ergonomics, and comfort when interacting with a computer. This includes the integration of attention and touch (UIST’14-16), pen computing (CHI’16-17), 3D gestures (SUI’17), and head pointing (VR’19).

Eye-tracking. I have investigated eye-tracking calibration methods that are implicit, easy to use, and automatic (UIST’13, ETRA’19). I have also conducted a range of experiments studying visual attention in contexts such as bimanual interaction (CHI’16), mobile scrolling (CHI’18), and VR (VRST’20).

Usable Security. I also have experience and ongoing work in Usable Security research. In VR, we assessed behavioral biometric user identification based on headset and controller motion (CHI’19), and we conducted a study of biometric, physical, and behavioral access methods (MUM’18).

Sketching and Design. Digital tools and techniques to enhance the user’s capability to sketch and design. At Microsoft Research, I developed applications that unify stylus and multitouch input on mobile tablet devices to increase productivity (CHI’17). I built a graphic design application that takes the user’s visual attention into account (Best Paper Nominee, UIST’15), and investigated rapid prototyping tools for mixed reality (CHI’21).

AR/VR. Many of my projects are set in AR/VR. This includes eye-based multimodal input with freehand gestures (SUI’17) and handheld menus (VRST’20), as well as implicit motion tracking to identify the user (CHI’19). More recent work focuses on blending virtual and real spaces in the form of effortless pervasive AR interfaces, and on rapid prototyping tools for creating mixed reality content (CHI’21).